Power User, Meet C64 OS.

A new Operating System for the Commodore 64.

C64 OS enhances new hardware to get the most out of your C64.

Version 1.04 now available. From $59 CAD.

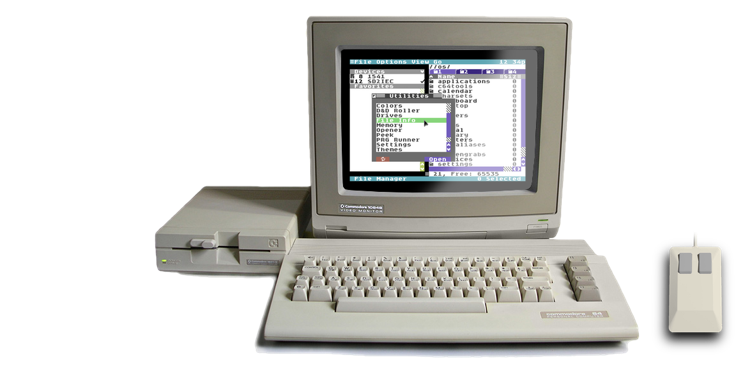

C64 OS is a modern operating system add-on that balances power and ease of use, while respecting the computer's modest specifications. C64 OS lets you do things with your Commodore 64 that Commodore would never have imagined.

Over five years in the making, C64 OS is a platform ideally suited for a whole new class of application. Applications ready to take advantage of mass storage, wireless networking, and more RAM, in an elegant, modular and expandable system, with a beautiful user experience you'll feel comfortable with from the first click.

“A match made in pure 6502 assembly language heaven.”

A licensed copy of C64 OS v1.04 includes:

- Access to the 3,500-word online Getting Started Guide

- Access to the complete 75,000-word online User's Guide

- A 46-page full-color professionally printed User's Guide Supplement

- A pre-installed copy of C64 OS v1.04 on a System Card (32 MB or 64 MB SD Card)

- An installation archive and install tools for C64 OS v1.04 for other storage media

- App Launcher, File Manager, C64 Archiver, 3 additional Applications and 30 Utilities, plus 10 KERNAL modules, 12 Drivers, 18 Libraries, 24 Toolkit classes, and 8 additional Tools

- Two high-quality promotional C64 OS vinyl stickers

- Access to ongoing patches, bug fixes and feature updates to C64 OS

- Access to new non-commercial Applications and Utilities for C64 OS

- Access to a C64 OS Discord server for support, help, bug reporting, news and community

The 64 MB System Card also comes with these bonuses:

- A pre-installed CMD HD hard drive image of C64 OS v1.04 for use in VICE

- A pre-installed IDE64 hard drive image of C64 OS v1.04 for use in VICE

- A collection of C64 graphics files that can be viewed with Image Viewer

- A collection of popular HVSC SID tunes that have been relocated for use in C64 OS

C64 OS is available for purchase as a physical product, and starts at just $59 CAD*

* $59 CAD is approximately $45 USD, for the Starter Bundle. Limited Supply.

Not including shipping or applicable sales tax.

Testimonials

I have used C64 OS literally every day since I received it, even if it was just to turn on my C64 and see it load, and it brings a smile to my face that it exists every time! 😊

The app launcher itself has definitely proved to be something that makes it significantly easier for me to use my C64 for finding and running various applications in my day, where often my time at my desk is limited. Thank you again!

Adam K0FFY, November 2022, X (Twitter) DM

C64 OS is the perfect blend of modern OS concepts with mechanical sympathy for the classic Commodore 64! It has truly rekindled my interest in the design and implementation of computer operating systems. Not only that, it's an absolute delight to use, and sometimes fools you into thinking you're working on a machine designed this millennium!

But wait, there's more! If you want to write software for your Commodore 64, C64 OS does almost all of the heavy lifting for you! A comprehensive KERNAL API, object-oriented programming, and a UI Toolkit inspired by OpenStep all combine to make 6502 assembly language application development a dream!

Matt Stine, September 2023, Discord DM

Place your order now

Choose your bundle:

- Starter Bundle (32 MB System Card. Now twice the capacity of the v1.0 Starter Bundle.)

- Standard Bundle (64 MB System Card. Most popular.)

Starter Bundle (32 MB System Card)

The starter bundle comes with a 32 MB System Card. A complete copy of C64 OS is pre-installed and ready to be used with an SD2IEC. In addition, an installation archive and install tools are included, which can be used to install C64 OS on other supported storage media or on VICE. You'll also get a professionally printed full-color 46-page User's Guide and two high-quality vinyl C64 OS promo stickers.

The 32 MB System Card is enough to hold the capacity of a hundred and ninety-five 1541 disk sides. This gives you plenty of room to use C64 OS comfortably as well as to install future updates, and add new Applications and Utilities as they become available. The System Card comes pre-partitioned with a main partition for C64 OS and a second partition for disk images. This allows you to mount disk images and use C64 OS to access and manage their content all from a single SD2IEC storage device.
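As a rough check on the disk-side figures, the arithmetic can be sketched in a few lines of Python. This is only an estimate under stated assumptions: "MB" is taken to mean 2^20 bytes, partition and file system overhead is ignored, and the function name `disk_sides` is made up for illustration. A standard 1541 disk side has 683 blocks of 256 bytes, of which 664 are free after formatting. How the figure of 195 quoted above was derived is not stated on this page.

```python
# Rough capacity estimate: how many 1541 disk sides fit on a System Card.
# Assumptions (not from the product page): "MB" means 2**20 bytes, and
# partition / file system overhead on the card is ignored.

BYTES_PER_BLOCK = 256
BLOCKS_PER_SIDE = 683        # total blocks on a standard 35-track 1541 disk side
BLOCKS_FREE_PER_SIDE = 664   # blocks reported free on a freshly formatted side


def disk_sides(card_mb: int, blocks_per_side: int) -> int:
    """Return how many whole disk sides fit in card_mb megabytes."""
    card_bytes = card_mb * 1024 * 1024
    return card_bytes // (blocks_per_side * BYTES_PER_BLOCK)


if __name__ == "__main__":
    for card_mb in (32, 64):
        raw = disk_sides(card_mb, BLOCKS_PER_SIDE)
        usable = disk_sides(card_mb, BLOCKS_FREE_PER_SIDE)
        print(f"{card_mb} MB card: between {raw} and {usable} disk sides")
```

For the 32 MB card this gives a range of 191 to 197 disk sides, which brackets the quoted figure. The 64 MB card comes out at roughly twice that.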

Please review the minimum hardware requirements before you purchase C64 OS. You can also consult the frequently asked questions for additional information about hardware requirements and compatibility.

What's in the bundle?

- 32 MB System Card with 2 partitions, suitable for introductory use

- 46-page full-color printed User's Guide

- Pre-installed copy of C64 OS v1.04 for use with SD2IEC

- An installation archive and install tools for other storage media

- Two high-quality vinyl 2" by 1" C64 OS promotional stickers

- An OpCoders Inc. Business Card

- Supply of the Starter Bundle is limited.

$59 CAD + shipping + applicable sales tax*

Standard Bundle (64 MB System Card)

The standard bundle comes with a 64 MB System Card. A complete copy of C64 OS is pre-installed and ready to be used with an SD2IEC. An installation archive and install tools are included, which can be used to install C64 OS on other supported storage media. In addition, a CMD HD hard drive image (DHD) and an IDE64 hard drive image (HDD) are included, each with a pre-installed copy of C64 OS, ready for use with VICE. You'll also get a professionally printed full-color 46-page User's Guide and two high-quality vinyl C64 OS promo stickers.

The 64 MB System Card is enough to hold the capacity of hundreds of 1541 disk sides. You'll have room to use C64 OS, install future updates, and add new Applications and Utilities as they become available, and still have plenty of space to install your favorite games, store all your documents, and have access to media files like pictures and music.

The System Card comes pre-partitioned with a main partition for C64 OS and a second partition for disk images. This allows you to mount disk images and use C64 OS to access and manage their content all from a single SD2IEC storage device. The System Card also comes packed with over 150 graphics files, and a selection of the top-rated SIDs from HVSC.

Please review the minimum hardware requirements before you purchase C64 OS. You can also consult the frequently asked questions for additional information about hardware requirements and compatibility.

What's in the bundle?

- 64 MB System Card with 2 partitions, space for documents, games and media

- 46-page full-color printed User's Guide

- Pre-installed copy of C64 OS v1.04 for use with SD2IEC

- Pre-installed CMD HD hard drive image for use in VICE

- Pre-installed IDE64 hard drive image for use in VICE

- An installation archive and install tools for other storage media

- A collection of C64 graphics files that can be viewed with Image Viewer

- A collection of popular HVSC SID tunes that have been relocated for use in C64 OS

- Two high-quality vinyl 2" by 1" C64 OS promotional stickers

- An OpCoders Inc. Business Card

$64 CAD + shipping + applicable sales tax*

Watch C64 OS in Action

C64 OS Video Demonstrations

Curious to know more about what C64 OS can do? What it's like to use it? Watch these two video demonstrations recorded for Commodore User's Europe showing the state of C64 OS and some of its useful and powerful features.

The first presentation is from June 2021. It shows many features that were complete at that time, but many features in the final version 1.0 were not yet available.

The second presentation, from one year later in June 2022, is much closer to the version 1.0 release and also includes a lengthy Q&A session at the end.

Presentation #1: June 2021 (23 minutes)

C64 OS makes a C64 feel fast and useful

Presentation #2: June 2022 (51 minutes)

C64 OS nearing v1.0 release

Presentation #3: December 2023 (56 minutes)

Advanced new features in C64 OS

Check out the complete online User's Guide for a detailed discussion of many more features and capabilities of C64 OS.

C64 OS Reviews and Unboxings

Reviews are different from demos. In the demos above, C64 OS is presented on a tight schedule with a prepared set of talking points to move quickly and show off the latest key features and abilities.

In a review or an unboxing, the host may be trying out C64 OS for the very first time, and is not an experienced C64 OS user who knows all the tricks and shortcuts. Therefore, reviews are a sort of adventure, exploring novelty and discovering what might be found. Some things mentioned may be slightly inaccurate and the hardware upon which the review is being run may be slower or less powerful than other possible hardware combinations.

Retro Recipes C64 OS Review (40 minutes)

4.5 out of 5 chips review of C64 OS v1.0

Amy and Taylor unbox C64 OS (12 minutes)

Eager and fun first impressions of C64 OS v1.0

C64 OS Interviews

Listen to a podcast interview about C64 OS, at New Game Old Flame with:

- Andy

- Diego, and

- Greg Nacu

Listen to a podcast interview about C64 OS, at The Hanselminutes Podcast with:

- Scott Hanselman, and

- Greg Nacu

C64 OS + Hardware Collections

New software, new hardware, all new capabilities.

A C64 fan's dream come true.

The following hardware collections are a sample from a myriad of possible combinations. They give a broad overview of the progression from the simplest and least expensive way to get more out of your C64 by running C64 OS, to the more advanced and modern collections that look fantastic and also offer the most powerful opportunities for C64 OS to take advantage of.

Barebones Collection

- A C64 Breadbin

- A Joystick

- An SD2IEC

- And C64 OS

The only new kit needed is an SD2IEC—these come in a multitude of form factors, some cost as little as $45 USD—and that's all you need to get into C64 OS.

Explorer Collection

- A C64 Breadbin

- A 1351 Mouse

- An SD2IEC

- And C64 OS

If you want to explore C64 OS further, the most obvious next step is to switch the joystick out for a mouse. If you can't find a 1351, there are many adapters for modern mice.

Hipster Collection

- A Commodore 64c

- A 1351 Mouse

- A Cableless SD2IEC

- And C64 OS

Step up to the sleeker C64c but keep the 1351 for that retro hipster feel. A cableless SD2IEC eliminates the serial cable for a tighter, more modern look.

Serious Collection

- A Commodore 64c

- A 1351 Mouse

- The JiffyDOS KERNAL

- A Cableless SD2IEC

- And C64 OS

If you want to get serious about using your C64 with C64 OS, upgrading your KERNAL ROM to JiffyDOS is the best first step.

Modern Collection

- An Ultimate 64

- A PS/2 or USB Mouse with adapter

- The JiffyDOS KERNAL

- A Cableless SD2IEC

- And C64 OS

The Ultimate 64 takes a JiffyDOS ROM image and offers an RTC and a 16 MB REU. Pair it with a cableless SD2IEC and a USB mouse and MouSTer for a modern C64 OS experience.

Network Collection

- An Ultimate 64

- A PS/2 or USB Mouse with adapter

- The JiffyDOS KERNAL

- A Link232 WiFi Cartridge

- A Cableless SD2IEC

- And C64 OS

Take the final step by adding a high-speed serial port with a built-in TCP/IP WiFi modem, and your C64 is ready to follow C64 OS as it marches into the future.

Don't miss these new pages: C64 OS on Real Hardware and C64 OS Media Resources.

Guides and Documentation

Jump in quickly with the Getting Started Guide.

Explore many features in more detail with the User's Guide.

- Getting Started Guide

- User's Guide

- Programmer's Guide

New or returning to the Commodore 64?

Are you new to the Commodore 64 and want to dive in and explore this culture-shaping classic computer? Or are you a returning user who had a C64 in your youth, and you've got the itch to relive old memories and start building new ones?

The Commodore 64 is a simple and fun machine, but it's a bit quirky and philosophically different from modern computers. Rather than read through old books to bring yourself up to speed, start with this:

An Afterlife User's Guide to the C64

Shipping and Sales Tax

| Destination | Shipping | Sales Tax | Delivery Estimate* |
|---|---|---|---|
| Canada | $4.10 CAD | 13% | 3 to 5 days |
| United States | $12.15 CAD | N/A | 7 to 10 days |
| International | $14.40 CAD | N/A | 7 to 14 days |

* Shipping delays may occur going to some destinations due to international relations
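To estimate what an order might come to, the bundle prices and the table above can be combined in a short Python sketch. The function name `order_total` and the data layout are made up for illustration. The sketch also assumes that sales tax is charged on the bundle price plus shipping, which this page does not confirm, so treat the Canadian figure as an approximation rather than the amount the checkout will charge.

```python
from decimal import Decimal, ROUND_HALF_UP

# Bundle prices in CAD, as listed on this page.
BUNDLE_PRICES = {
    "Starter": Decimal("59.00"),
    "Standard": Decimal("64.00"),
}

# Destination -> (shipping in CAD, sales tax rate), from the shipping table.
SHIPPING = {
    "Canada": (Decimal("4.10"), Decimal("0.13")),
    "United States": (Decimal("12.15"), Decimal("0")),
    "International": (Decimal("14.40"), Decimal("0")),
}


def order_total(bundle: str, destination: str) -> Decimal:
    """Estimate an order total in CAD, rounded to the nearest cent.

    ASSUMPTION: tax applies to bundle price plus shipping. The page
    does not say how tax is calculated.
    """
    price = BUNDLE_PRICES[bundle]
    shipping, tax_rate = SHIPPING[destination]
    total = (price + shipping) * (Decimal("1") + tax_rate)
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    for bundle in BUNDLE_PRICES:
        for destination in SHIPPING:
            print(f"{bundle} Bundle to {destination}: ${order_total(bundle, destination)} CAD")
```

`Decimal` is used instead of floating point so that cent values are not affected by binary rounding.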

Last modified: Mar 22, 2024