NEWS, EDITORIALS, REFERENCE

Distributed Jumptable

I've suffered a minor setback, I'd say it put me back maybe a week. Our work office was broken into last weekend. Front window smashed. My 2016 MacBook Pro was stolen right off my desk. Fortunately I had most things backed up. I didn't lose anything Commodore related, or anything personal really. But I have had to spend some significant amount of time reconstructing my work environment on a new iMac.

Also, very frustratingly, I lost perhaps a month of development on my current work project. As the sole developer, I wasn't committing to SVN very regularly. Which is something I'm going to start doing, probably once a week, in light of the rude awakening that fires and spinning disk failures are not the only way to lose data. Now back to the post I was working on.

Sometimes I feel like I'm climbing a mountain 1 inch at a time. This post is about some changes I've made to how the system jumptable works, and how modules are linked together.

I've been here before, and wrote about this general theme of development in at least four previous posts: (In chronological order, oldest first.)

To recap, C64 OS uses the C64's KERNAL ROM, and adds its own KERNAL on top of that. The C64 OS KERNAL is divided across 10 modules. These are units of code that get assembled independently, and that export routines that can be called either by other modules, or by application code.

The set of modules has been fairly stable for a while now, and consists of:

C64 OS KERNAL

|

When assembled, all the modules pack together and end at $CFFF. As I write more code the amount of space the KERNAL consumes continues to grow down into memory. It currently ends somewhere around $A800. Which means it's approximately 10K. 6K overlaps where the BASIC ROM normally sits. And 4K in himem from $C000 to $CFFF.

The value of a jumptable

The issue I originally worked through, and blogged about in the posts linked above, was how to best get the code in one module to be able to locate the routines that need to be called that are assembled into other modules.

Why was this an issue at all? Let's say Memory is the module highest in ram, so Memory.o would be assembled to the very end of $Cxxx. The next module has to end where Memory.o starts. Let's say Memory.o assembles down to just 200bytes ($c8 bytes.) That means the next module down, let's say it's Math, has to end at $d000 - $c8 or $cf38. The size of Math.o is different than the size of Memory.o, the size of each modules depends on exactly what it implements. So, let's say Math.o is 300bytes. Now let's say Math starts with a routine, mul16 (16-bit multiply.) Some other module, or some application code, has to be able to call mul16. But in order to do so, it has to know where in memory mul16 actually starts.

If you figure out where mul16 ends up getting assembled to, and you hardcode that address into some other module or an application, then you've got a serious problem. What happens when you have to go back and make a change to the Memory module that causes it to become 20 bytes longer than it was before? All the modules lower than it in memory have to be shifted down by 20 bytes, and all the offsets you've hardcoded become wrong.

In steps the idea of a jumptable, a venerable solution. What you do is you put a jumptable at a fixed location, where each entry takes up a fixed amount of space: 3 bytes. (A 1-byte jmp or jmp() instruction, followed by a 2-byte/16-bit pointer.) You can name your jumptable entries, just as the C64 KERNAL has names for all its routines, like: setnam, setlfs, chkin, chkout, chrin, chrout, etc. You make sure your code always calls the jumptable entries, and then you keep the order of the routines in the jumptable stable (as stable as possible), and when the positions of modules shift around because they're under development and they're changing size, you just update the jumptable to point to the correct locations.

There are two problems with this though.

First, keeping the jumptable up to date quickly becomes labor intensive and tedious work. Any long and tedious process makes it less fun to work on a project.

Second, is the practical problem of finding the routine offsets within a module. It's similar to the problem of finding the start of a module, but it's within a module. So, let's say the File module has 6 routines: finit, ferror, fopen, fclose, fread and fwrite. Where does fclose begin, relative to the start of the File module as a whole? How do you get that information out and into the main jumptable?

Next step, export tables

I came up with a pretty decent solution, that worked pretty well for a while. When you assemble a source file, the assembler knows the addresses of labels within that file. But, after you've assembled a file and you end up with a a file that contains an executable block of code, all the information about the offsets to various routines within that file are lost. And looking through the code and calculating those offsets manually is very time consuming and contributes to the first of the two problems mentioned above.

Instead, I came up with a two pronged solution. The first prong is to build an exports table at the start of each module. Let's take File for example. It exports the 6 routines listed above. It has many routines besides those six that are called by the six main routines in order to implement their functionality. But only those six are meant to be exposed to the rest of the system. Therefore, the very top of the module gets a table of pointers like this:

Those labels get handily resolved at assemble time, and it becomes easy to find those routine start points within the module, as long as you know where the module starts. If you know where the file module starts, then you can find, say, ferror, like this: filestart+$02, or fwrite as: filestart+$0a

The second prong of the solution is to have each module know where it should be assembled to. To do this is a little bit labor intensive, but I've yet to find a better way. I assemble a given module to wherever, and note the final size of the output file. Then I build a header file, called modules.h, that gets included by every module. It looks somewhat like this:

How does this work? The module highest in memory (which happens to be the Memory module, pardon the name collision) doesn't quite end at $CFFF, because the main jumptable has to fit in there, but I'll leave that aside for a moment. For now let's just assume we know where the jumptable starts. Memory.o will then start at the start address of jumptable minus its own size, that's the $c8. But then the next module, say, Math, it needs to start wherever Memory starts minus its own size, that's the $d9. And so on. I have to manually keep up to date the assembled sizes of all the modules, but as long as I do that, this common include (modules.h), has a set of labels that indicates where each module needs to start.

Since each module's source includes this file, the Memory module sets its own start to smem: *=smem. And the File module sets its own start to sfil: *=sfil. And so on.

Next, of course, you have to build the jumptable itself. The jumptable is a separate independently assembled file, and it also includes modules.h It ends up looking something like this:

One issue, which is a bit laborious, is that this file also has to be manually kept up to date, with indirect jumps paired up with the number of exports found at the start of each module.

How it worked is pretty slick though! Each indirect jmp() jumps through a vector found at the start of the module. We know the module's start address from modules.h, plus an offset to the routine. Each offset is incremented by 2, because the exports table is a table of 2 byte pointers.

It works! It works, but. There is one more piece. Perhaps I should have said it's a 3 pronged approach. The third prong is the module header files. If Math needs to call a routine in Memory, then Math can call the jumptable entry for the Memory routine. But somehow, Math needs to know where the jumptable entry is.

Remember how in modules.h, the start of the module highest in memory is calculated as the start of jumptable minus the size of the module? Somehow we need to know how big the jumptable is. To know this we need to know how many entries are in the jumptable, where each entry is a 3-byte jmp (or indirect jmp.) Not only do we need to know how big each assembled module object file is, but we also need to know how many exports it offers, and thus how many jumptable entries it will require.

Up to now, I've used a few sample lines, but here is the actual modules.h file from C64 OS, as it was in June of 2018. The top section lists the jumptable offsets, starting at $D000 and subtracting multiples of three. For example, Memory exports 8 routines, Input exports 11, String exports 5 and so on. Each Jxxx constant holds the location in the jumptable of the jump entries for the module. In the lower section, the size of each module (manual updated) is subtracted from the start address of the preceding module.

Each module, as well as application code, needs to be able to find these jumptable entries. The solution for this is that each module has a header file, which can be included in the source of anything that wants to call an exported routine from that module. The header lists the names of the routines, as labels, set equal to the Jxxx address + an offset incrememnts of three.

Not only does this header file allow other code to jump through the jumptable to a routine in another module, but it also serves as the documentation for that module and its routines. I use a scheme of inputs and outputs (-> and <- respectively) with letters (A, X, Y, C) for the registers and carry flag. As well as some special ways of passing data, such as RegPtr to represent a 16-bit pointer, in X = Low Byte, Y = High Byte format. I also use RegWrd to mean the same thing, but when the 16-bit value is a data word but not a pointer. Or, if the routine takes inline arguments, I'll use A0, A1, etc as inline argument 0, 1, 2 etc. Here's how this looks for Memory.

That's cool. But it's a lot of overhead to keep all that up to date. Couldn't we just hardcode the addresses in the jumptable? And put those addresses into the header file manually? Yes we could, but this leads us to the main problem with the jumptable, more generally.

The KERNAL ROM has 39 entries in its jumptable. C64 OS adds over 60 more, and I'm still developing. C64 OS divides the routines into modules, thematically related units of code. Memory Management, File and Disk I/O, Input and Events, Menu and Status bar, etc. The KERNAL ROMs routines are grouped somewhat sensibly, but not entirely. Let's start with a look at those.

KERNAL ROM Overview in 60 seconds

Starting at the bottom of the table, ($FF81), and working up into memory ($FFF3).

The first six are related to system initialization: CINT, IOINIT, RAMTAS, RESTOR, VECTOR, SETMSG. These initialize the VIC and Screen Editor, initialize I/O devices, perform a RAM test, restore top and bottom of memory for BASIC, reset default KERNAL vectors, and enable printed system messages. You can imagine these being part of a module.

After that though, things start to become illogically ordered. SECOND, TKSA (send secondary addresses for talk and listen to the IEC Bus). Then MEMTOP, MEMBOT (manually set memory top and bottom, for BASIC use.) SCNKEY (scan the keyboard). SETTMO (set a timeout flag for IEEE bus card). Then all of a sudden you've got six more in a row that are closely related to SECOND and TKSA, but not together with them. ACPTR, CIOUT, UNTLK, UNLSN, LISTEN and TALK. These are all IEC serial bus related. Read in a byte, write out a byte, untalk, unlisten, listen and talk. The SCNKEY and SETTMO, and the memory routines seem oddly out of place.

Next we have 12 in a row that are mostly related to each other, but I question their internal order. READST (read status), SETLFS and SETNAM (Set Logical File Number, First and Second Addresses) and (Set the filename, or command string). OPEN and CLOSE (open and close a file, based on a logical file number). Then channel I/O routines: CHKIN, CHKOUT, CLRCHN, CHRIN and CHROUT. These are abstractions above the TALK and LISTEN level, for setting up the input and output channels. Clearing and restoring input/output to keyboard and screen. And reading individual characters in and sending individual characters out, on those channels.

LOAD and SAVE come next. They're a thin layer of abstraction above doing CHKIN and then doing repeated CHRINs, or doing CHKOUT and repeatedly calling CHROUT.

Then you get a bunch of things seemingly out of order again. SETTIM and RDTIM for setting and reading the system time. STOP checks if the user has pressed the stop key. GETIN is like CHRIN, but is mostly only for the keyboard. CLALL closes all files, this seems file related, but comes following the time calls and stop check. Then you've got UDTIM which updates the system time, separated from SETTIM and RDTIM.

And lastly, SCREEN, PLOT and IOBASE. The first two help abstract interaction with the screen to deal with the fact that the PET, VIC-20, C64 and others had different screen resolutions, and by default have screen memory at different addresses. And lastly I/O, ($dxxx) on a C64, is not in the same place on the other Commodore 8-bits.

Why can't those colors just be grouped together??!

Explaining the KERNAL ROM's Oddities

The Commodore KERNAL is more than just the C64's KERNAL. It was the core operating system (along with BASIC) of the entire Commodore 8-Bit line: PET, VIC-20, CBM-II, C64, PLUS/4, C128, etc. I don't know the full history of the KERNAL's development, but I do have a few insights. Here is a diagram of the evolution of the KERNAL ROM through these machines.

I found that diagram here: https://wikipedia.ma/commodore-kernal-historical-previous/, unfortunately the english is not quite normal, it reads like maybe it was machine translated before that technology got good enough. Consequently it's hard to understand, and that's a shame because I'm sure it's full of interesting historical detail.

This leads us finally to think about the problem of a jumptable. Once a jumptable address is set, say, $FFD2 is the address for CHROUT, thousands of eager developers start writing code that JSRs to $FFD2 to output a character. If you have any hope of maintaining compatibility, you have to keep CHROUT at $FFD2, essentially, forever. That compatibility can be between KERNAL revisions of the same machine, such as the C64 to the C64c, or a C64 with a JiffyDOS KERNAL, and extends to compatibility across machines, such as from VIC-20 to C64 to C128. SCREEN is a pretty obvious example. The whole point of SCREEN is that you can call it to introspect on the screen resolution of the machine you happen to be running on.

Most programmers, seemingly, preferred to simply put out multiple versions of their program, for C64 and C128, for example. But some programs, the utilities packaged with CMD hardware, for example, went to great pains to make one version run natively both on a C64 and a C128. FCOPY, for instance, the same version runs on both C64 and C128, and it is pretty convenient. Putting in that much effort seems to me to be rare, but the theory, at least, of the KERNAL and the jumptable was to facilitate compatibility across the 8-bit line.

It should be pretty obvious what happens. It can happen to internal development before the KERNAL is ever even released to the public, but it certainly happens after a public release. New routines can only ever be added to the bottom of the jumptable. The top of the table, the part that has already been publicly available in a commercial product release, has to stay the same. Otherwise, every new revision, and every new machine, requires developers to reassemble their programs, and users to get new versions. The result of these constraints is that over time the jumptable's entries become disorderly.

Further Complications in the C64 OS KERNAL

There is one obvious difference between the C64's KERNAL ROM and the KERNAL of a third-party OS; a third-party OS, like C64 OS, is loaded from disk (SD Card / Harddrive) into memory, and will hopefully come in many future versions. It's those versions that need to remain compatible with each other. The C64 KERNAL ROM, besides a JiffyDOS update, which not everyone has, has been the same for almost 40 years.

I don't know how exactly the Commodore KERNAL was originally programmed. Were they programming it on a bigger computer, with a cross assembler? Or were they writing it by hand, and plotting out the memory usage on paper and putting it in one ML instruction after the other? If the latter, it would have been something like the ultimate bootstrapping adventure. But one thing they wouldn't have run into is constraints on the number of labels their assembler could handle.1 If they manually calculated the address of where each routine begins, they could have any part of the KERNAL or BASIC make calls directly into any other part.

Besides the OCD-induced discomfort of having SECOND and TKSA separated from the other IEC bus routines in the jumptable, there is no technical issue with putting them wherever they fall.

In C64 OS, the routines for, say, File and Disk I/O all come from the File module, a single source code file. And the fact that those routines are grouped together in the jumptable is partially enforced by module.h calculating the offset into the jumptable for the File module's routines. The file.h header file, included by other modules and applications, finds the entries in the jumptable as sequential offsets from that single start address. It's really great for those of us who are consumed by code orderliness.

But, the rubber has hit the road.

I am no longer just working on KERNAL modules, I'm now working on the first and central C64 OS applications and the first several C64 OS utilities. These applications will be bundled with the OS, but they are structurally the same as any other application. They get loaded with the loader, they make KERNAL calls to get stuff done, and most importantly, they do not import modules.h.

If an application wants to use routines from the file module, it includes file.h and then does JSRs to the labels provided by that header.

Paper notes I use to record the size of each module, and its number of exports.

The problem is that, even though I'm starting to write applications and utilities, the KERNAL is not 100% complete. As you can see in my notes above, the number of modules, how big each one is, and how many routines they export continues to evolve. Which is fine, because any changes to the KERNAL simulate what it would be like for the application to deal with a major version change, from say 1.0 to 2.0.

At the moment, when I make a change to the KERNAL I usually break compatibility with the existing apps, which requires me to reassemble them. That's fine for me, because I have the source code to everything under the same umbrella. It is definitely not fine for any third-party applications. The whole point of the jumptable is so that, the user updates their OS version, the KERNAL undergoes many changes, but the jumptable stays backwards compatible, and a third-party application, already installed, continues to be compatible without requiring an update. Especially because, as we know, application developers move on, and their source code gets lost, and sometimes updates will never come.

What Happens in the C64 OS KERNAL?

This is a bit of an aside to describe what I'm actually working on, but I promise it'll come back and be relevant to the jumptable.

You might be wondering, so what's the problem? What is happening in the C64 OS KERNAL? Let's say I want to add a new routine to C64 OS. Real world example, the system's Status Bar has gained the ability to toggle through modes. One mode shows the current disk status, one mode shows a custom application status, and a third mode shows the currently open file. The currently open file is stored in a C64 OS File Reference, which is pointed to by the Open File Reference, which is a system variable stored in workspace memory. However, a File Reference isn't readily renderable, it needs some code to convert it to a string.

This got me thinking. C64 OS works with File References a lot. They're central to a lot of sophisticated behavior. And the Homebase Applications need to be able to store to disk (and restore from disk) file reference data. What that means is the in-memory File Reference structures need to be serialized and unserialized. Applications can use these routines to automatically (say, in the quit handler) serialize the file reference to their currently open file, and write that serialized reference to a file in their own app bundle. When the application is opened up next time, the app can look for the serialized reference file, and if its there, can unserialize it back into an in-memory File Reference, and then use that to reopen the document you were editing last. This kind of state-awareness2 is all part of an effort to have the OS dramatically improve the user experience.

If we're going to have routines to serialize and unserialize File References, it's an obvious win to use the existing serialize routine to convert the Open File Reference to a string, which can then be drawn into the status bar. That seralized format is like this:

8:5:playhouse.koa://pictures/koalas/The format is unique, but reminiscent of paths used by CMD devices, or SD2IEC, which in turn copied the format used by multi-mechanism disk drives from Commodore. It's differences though are designed to solve other problems.

Each element is separated by a colon. A colon is not allowed in a filename on Commodore file systems, (nor, incidentally, were they allowed in Classic Mac OS.) The order of the elements goes, Device:Partition:Filename:Path. You might ask why should the filename come before the path? There are a couple of reasons. A filename has a fixed length of 16 characters. So, in a File Reference structure, all the fixed length elements come first, and the path, the only variable-length element, comes last. This allows the filename to always be found at a fixed offset in the struct, which makes it much easier to find to send to the drive. The path also has a fixed start offset, so it's just as easy to find. Putting the serialized format in the same order as the elements of the in-memory File Reference makes it easier to convert to a from.

Plus, there is one more big benefit, and a happy coincidence. When displaying the Open File Reference in the status bar, the most important thing to see is the name of the file. The status bar is only as wide as the screen, which is 40 characters. If the filename followed the path, a long path would get cut off and you wouldn't see the filename at all. Modern macOS usually solves this by replacing parts of the path with an ellipsis. But, that takes a lot of extra code. With the format above, there is no extra code. The serialized format on disk is human readable, and can be drawn directly to the status bar without modification. You get to see the device, the partition,3 and filename of the currently open file, plus the start of the path. I like it.

Adding New Routines to the KERNAL

After going through that aside, we've got a new routine: FREFCVT, File Reference Convert. One routine with two modes. Set the carry to serialize a File Ref to a string, pass a RegPtr to the File Ref, get back a RegPtr to the string. Or, clear the carry to unserialize. Pass a RegPtr to a properly formatted string, get back a RegPtr to a freshly minted File Ref.

The question is, where to put this routine, in what KERNAL module? Obviously it'll go in the File module. So, the File Module gains a new export. But then the jumptable needs to be manually updated to list a new entry. Furthermore, that entry needs to follow the other File module entries. Not just for my OCD-pleasure, but because the file.h include dynamically computes the location of the new entry. Of course, this also necessitates updating modules.h, because it needs to know how big the jumptable is going to be, so other downstream offsets can be computed.

The problem gets worse. The jumptable sits highest in memory. If it gets longer, (no matter where you put the new jumptable entry,) then it pushes not just the new bigger File module down in memory, it pushes ALL the modules down in memory. They all have to be reassembled. So it doesn't much matter that the jumptable entries lower than those for File have also been bumped down. The next thing you know, I've got to reassemble all the KERNAL modules, plus the booter, plus the jumptable itself, and update modules.h and file.h. That's a LOT of work.

And we haven't even touched on the REAL problem. The real problem is that when the jumptable changes, it breaks compatibility with apps and utilities too. So, I end up having to reassemble basically everything, one... file... at... a... time.

Whenever a process that used to work becomes too laborious to be fun, I start searching for a different solution. But in this case, it's worse than just being labor intensive and time consuming, it just won't work for future versions of C64 OS.

Introducing the Distributed Jumptable

I rolled some thoughts around in my head for a while. I tried out a couple of different ideas, but I'm not going to discuss the ones that didn't work out.

At the top of each module is the vector table of its exports. The jumptable jumps through these vectors, by knowing where each module starts, and adding a 2-byte offset for each routine. It occured to me that, one really only needs to know where a module starts. If you could jump through a vector to a vector and jump through the second vector too, you'd be set. Unfortunately, the 6502 has no instruction that allows you jump through a double vector like that.

But then I got to thinking, the modules themselves don't need to jump through a vector to find the start of other modules, at all. The modules already include modules.h, which computes and defines the start labels for each module. And, because I'm in control of all the modules, any new version of C64 OS will simply have all the modules assembled together at the same time. A module, like say, File, that needs to allocate a page of memory by calling pgalloc in Memory, could do it directly like this:

JSR (smem+pgalloc)Perfect, right? That doesn't go through the main jumptable at all. Wait, not so fast. JSR doesn't have an indirect mode. Only JMP does. Damn. In order to JSR through a vector, you basically have to JSR to a JMP(), and in C64 OS every jumptable entry is an indirect JMP(). Let's stick with the module-to-module calls for a moment. Typically a module JSRs to a JMP(), through a vector at the top of the module. That's 3 bytes and 6 cycles for the JSR, plus 3 bytes and 5 cycles for the JMP(). Plus, it's 2 more bytes for the actual vector itself at the top of the module. That's 8 bytes and 11 cycles for the whole process.

But that seems unnecessary, at least for module-to-module, because each module already knows where each other module starts. Instead, we could change the vector table at the top of the module to be a regular jumptable. Those JMPs don't need to be indirect, because they're jumping straight to a label somewhere within that module. The offsets between routines at the top of the module becomes 3 now, instead of 2. But, to continue the example, File could do the following:

JSR smem+pgallocWhere pgalloc, along with all the other routines exported by Memory, is aligned with the new 3-byte offsets. This would mean 3 bytes and 6 cycles for the JSR, and 3 bytes and 3 cycles for the direct JMP, and there is no vector. So that's a total of just 6 bytes and 9 cycles. It's certainly a win for module-to-module calls. Now what about application to module calls?

An application (and anytime I say application here, I am also including utilities) needs to know where a module starts. You might think, oh, well that's easy, it can just include modules.h too, and you can just distribute modules.h for third-party developers. But, that won't do. Because remember unlike the KERNAL modules, that are assembled, packaged and distributed together, an application that is already out there, needs to maintain compatiblity with new versions of the KERNAL modules. Somehow the application needs a way to know where each module starts. Jumping through an vector to a vector, indirect-indexed would be ideal. But the 6510 cannot do that.

Here's the solution I came up with. In place of the main jumptable, which lists all the routines of all the modules, and changes length whenever a new routine is added, instead we put a module lookup table there. The table is a list of addresses to where each module starts. The order of the entries in this table would have to stay stable, though the order of the modules in memory would not. This lookup table can get longer in future KERNAL versions by adding new modules to the bottom of the table.

Here's the module lookup table, in memory and translated to paper for clarity.

Next, the application needs to know where to JSR to call a module routine. But it can't know where the module starts at assemble time, because it has to run against different versions of the KERNAL. In short, it needs some way of looking up where the modules are located at runtime. Or, more specifically at application loadtime. Well, that's what the new module lookup table is for. My idea then is to use the loader to modify the application at loadtime and to use the addresses in the lookup table to rewrite where the application will JSR to. But how can we realistically do THAT?

If you simply strew your code with a mix of JSRs to the C64's KERNAL ROM and your own routines in your application, along side calls destined for routines in C64 OS's KERNAL modules, you end up in a situation that is nearly as complex as relocating assembled code at runtime. It's very difficult, full of pitfalls, and requires a lot of code, computation and time, (especially on the C64, maybe not on a PC the way sidreloc works).

Instead, my solution is that the application should include its own jumptable that takes the place of the jumptable the system would normally provide. But with a few tweaks.

First, there are around 70 C64 OS KERNAL calls. A given application may only use a small subset of these, so the application's jumptable only needs to include entries for the calls it needs.

Second, the point of having a jumptable is that all of the external calls are clustered together in a known location, with a pattern of exactly 3 bytes per entry, so it is very easy for a loadtime routine modify this table. Here's an example of the table an application would include to call these C64 OS KERNAL routines.

What gets assembled into the application is actually not a set of JMPs, it's a set of 3 bytes of data. The first byte is the module's lookup table's low byte address. The addresses start at $D000, minus 2 for each module. The Memory module's entry is at $D000-2 ($CFFE), so, lmem is defined as $FE. The File module's entry is at $D000-12 ($CFF0), so lfil is defined as $F0. And so on. Following this byte is a word with a value for the offset of the routine in the jumptable at the top of that module. These constants are available in the headers for the modules, but what matters is that these don't change, even as we add new modules, and modules change sizes, and new routines are added to modules. As long as the jumptable provided by a module stays stable. It's just like a the normal system jumptable, but instead of one of them where all the routines from all modules are packed together, it is distributed into multiple jumptables, one per module.

The application's jumptable is surrounded by two elements. It starts with a label "extern", to identify the beginning of the table of external calls. And it ends with a single byte terminator, $ff. This is how the loadtime routine that processes this table knows where the table ends. Every C64 OS application has an init routine that is called automatically by the system after the application is loaded into memory. The init routine merely needs to include extern.s which brings in a short routine that is automatically run, just once, to process the extern table.

The routine starts at the extern label. Reads a byte. If it's $ff, it stops. Otherwise, it uses it as the low byte of a pointer to the module lookup table, which has to be entirely contained within $CFxx. That's enough room for 128 modules. (There will never be 128 C64 OS KERNAL modules, so that may as well be unlimited.) Next, it reads the module address from the lookup table, (little endian), and adds it (16-bit-style) to the .word in the extern table and replaces that word.

For example. lmem is $fe and pgalloc_ is, say, 18. The extern entry thus has three bytes: $fe,$12,$00 The $fe is read and set as the low byte of a pointer, whose high byte is hard set to $cf. The pointer is thus $CFFE. From $CFFE, a byte is read, it's $94 (see the image above of the lookup table paper transcription.) $94 is added to $12 and $A6 is written overtop of the $12 in the extern table. The next byte from the looktable is read, that's $CE, it's added with the carry to $00 from the extern table, and the result, $CE, is written overtop of the $00 in the extern table. Lastly, the initial byte, lmem ($fe) is replaced with $4C, an absolute JMP.

The routine loops and continues processing all the others in the table this way until it hits the $ff at the end of the table. $ff is never used for any actual lookup table offset, which are always divisible 2. ($FE,$FC,$FA,$F8...)

Advantages, Conclusions, Wrap Up

Are there any disadvantages? Yeah, there are always some disadvantages. One disadvantage is that the application actually has to concern itself with building its own jumptable. It is a bit of added burden on the application developer, and the application's source code. Although, in my, albeit early, experience working with this technique there is a small advantage gained in making it explicit which C64 OS KERNAL routines are used by an application. It used to be that I'd include the header for, say, the Service module, but then you'd have to search the code laboriously to figure out exactly what routines from Service are actually being used. Now, when you want to use a KERNAL routine, you add an entry in the extern table, and those are all grouped together in one place.

Let's think about memory usage for a moment. There used to be a jumptable, with close to 70 entries in it, 3 bytes each. Plus, each module needed an export table, 2 bytes each for all 70 routines. That's 70 * (3 + 2), or 350 bytes. That jumptable has been split into 10 places, so, it's still 70 * 3, but the exports tables are completely gone. That's 70 * 2, or 140 bytes less memory. We need the lookup table, that's 20 bytes, but we've still saved 120 bytes, or around a half a page. Now, you do need the additional extern table in the application, which takes up space. But, it's in application space, not KERNAL space. And moving things out of the KERNAL when possible seems to be a net good. Because, for example, an application that only makes a few KERNAL calls only needs to have those in its extern table. And each application can have a different subset of extern entries.

One last point on memory usage. Of course, you also need the routine to convert the extern table at loadtime. That's a handful of additional bytes. However, I'm also considering using a loader program, that will be loaded by the KERNAL, that will then do several tasks that are currently in the KERNAL, before itself being replaced by the application it loads in. So that those loadtime routines don't need to be permenantly in memory. The extern table conversion routine may well find itself migrated into that loader. When the time comes, I'll write a full post to talk about that.

There is a small difference in the execution times. One module routine calling another is faster because of the direct JMP, rather than the indirect JMP(). 3 cycles instead of 5 cycles, so 2 cycles shorter. This could be helpful when they add up. The main eventloop, for example, continuously calls routines in the Input, Timers and Screen modules. And the IRQ handler is constantly calling routines in the Input module. On the other hand, it's a bit slower for the application to call KERNAL routines, because there is a double absolute JMP, rather than a single indirect JMP(). But, it's small: 3 cycles + 3 cyles, as opposed to 5 cycles, it's only 1 cycle longer. But far more time is typically spent in the KERNAL making other KERNAL calls, than in the app making KERNAL calls, so, from a speed perspective it's also a net win.

And it actually kind of makes sense why. Rather than having every KERNAL call be an indirect JMP(), those runtime lookups are replaced by a fixed number of loadtime lookups.

I was originally willing to take a hit on speed and memory in order to solve the more pressing problem of keeping applications compatible with a changing KERNAL. But the irony is that the solution to the big problem has probably made things faster and could result in less memory usage. That's the beauty of software. This is also what it feels like to climb a mountain an inch at a time.

Part of the jumptable, distributed across modules.

Above is now an example of how the exports tables at the tops of two modules have been changed to simply be a set of absolute JMPs. Each module has its own, thus the jumptable is now distributed among 10 different modules.

You can also see that each module's start address is set as sinp (for Start Input) and sser (for Start Service). These still come from modules.h. However, modules.h is now much simpler. It no longer needs to track the number of exports from each module. As, the number of exports from the modules no longer affects a main jumptable, which in turn affects where the first module will begin. Here's how modules.h now looks.

modules.h now only lists module sizes.

Where once I needed to track on paper the size of each module, plus the number of exports of each, and put the number of exports modules.h, I no longer have to do that. In its place is a single label, sltb (for Start Lookup Table). The only time this changes, is if I change the number of modules, which is very rare.

Each module's header file has had to be updated to work with the new distributed jumptable. Here's an example of memory.h.

memory.h updated for the new distributed jumptable.

In an application's source code, I want the primary label, the one that follows the JSR in the main source, to be as clean and unadorned as possible. However, it needs to refer to a label that is used to represent that routine's offset. The offset labels, in the header file, therefore have a trailing underscore.

This allows the extern table to be structured like this:

memcpy .byte lmem

.word memcpy_

There is a clear parallel between the main label "memcpy" and the label that's in the .word "memcpy_". Then, of course, these header labels are incrementing by 3 now.

The label for the start of a module comes from modules.h. However, the header file has to provide a label for where to find the entry in the lookup table. It's just the low byte, as described earlier. But here it is. lmem = $ff-(2*1)+1. The 2*1 looks useless, but it's actually 2 times the assigned module number. Memory is 1, Input is 2, String is 3, etc. The assembler does like it if the subtraction underflows. In theory it's just $00 - (2 * module_number), but the assembler complains when the subtraction goes below 0. Instead, I compute it as $ff - ... and add 1 back on at the end. It's the same as where it would be if the assembler allowed it to rollunder.

That's it for now. I think I'm in a much better position to write apps that won't keep breaking whenever I make a small change to the KERNAL. And the whole system has become much more maintainable.

- Because, remember, I'm coding native. I'm use Turbo Macro Pro+REU. [↩]

- Admittedly inspired from iOS, but it's a great feature. [↩]

- If the device is a 1541, 1571 or 1581, or compatible, without partitions, the partition number is listed as 0. This is a Commodore DOS standard. ie. LOAD "0:myprogram",8 is the same as LOAD "myprogram",8 [↩]

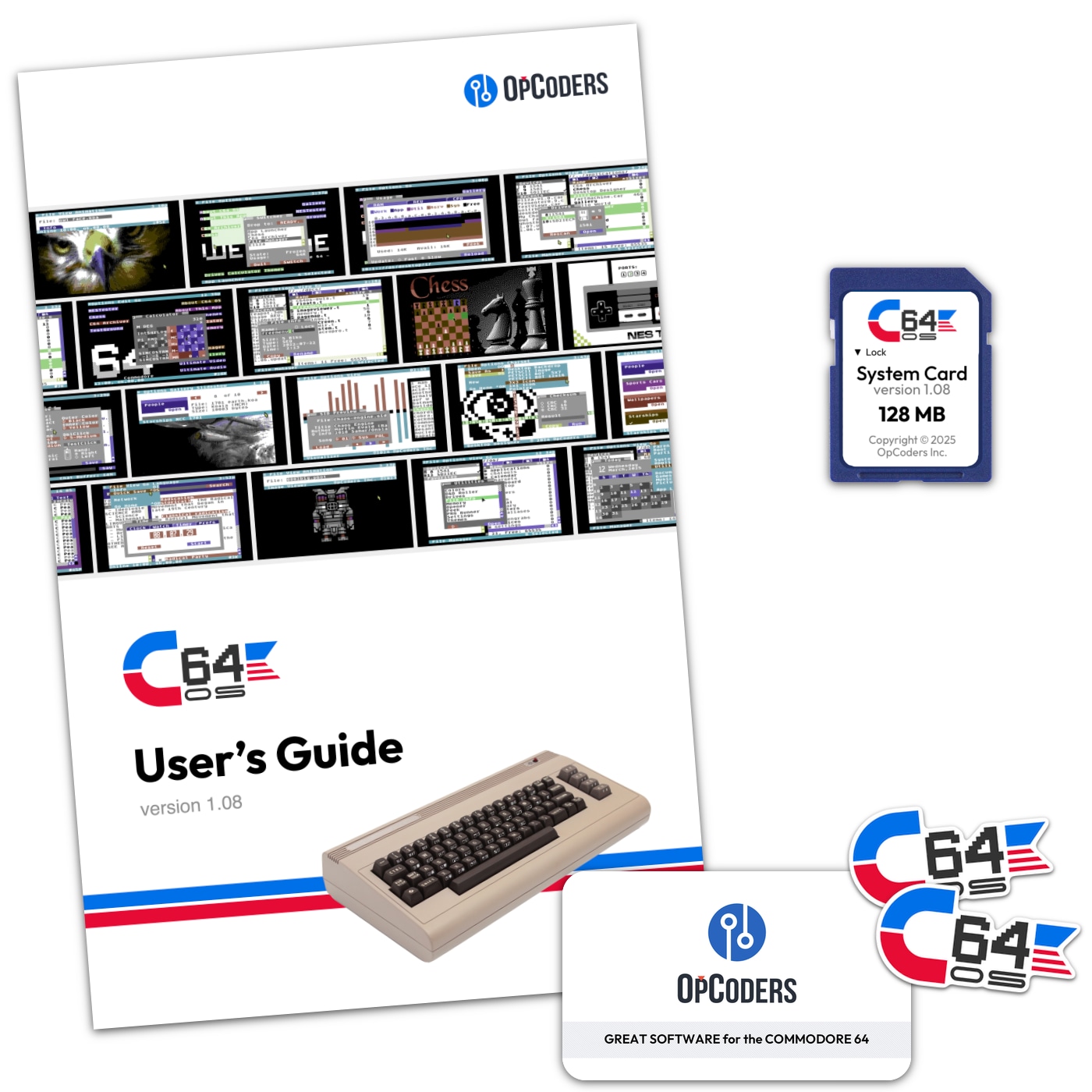

Do you like what you see?

You've just read one of my high-quality, long-form, weblog posts, for free! First, thank you for your interest, it makes producing this content feel worthwhile. I love to hear your input and feedback in the forums below. And I do my best to answer every question.

I'm creating C64 OS and documenting my progress along the way, to give something to you and contribute to the Commodore community. Please consider purchasing one of the items I am currently offering or making a small donation, to help me continue to bring you updates, in-depth technical discussions and programming reference. Your generous support is greatly appreciated.

Greg Naçu — C64OS.com